2D Architecture

NUKE’s 2D architecture is largely defined by the Iop class. This class inherits from Op, reimplementing _validate() and extending Op's functionality with an image processing path. Iop is in turn extended and specialised by PixelIop and DrawIop, which provide simplified implementations for specific classes of image processing operator.

Iop specifically defines how NUKE’s channel system works, how regions of interest and regions of definition are passed up and down the tree, how 2D data is cached, and how bounding boxes and image formats are dealt with.

Scan-line based

NUKE is a non-destructive, node-based, 32-bit float, multi-channel, scan-line image compositing system. You can think of NUKE's purpose as consuming input images, performing image modification operations on them, and then producing output images. In addition to the scan-line based 2D image system there are a number of sub-systems, such as the 3D system, the Deep Image compositing system and the Particle system. In general these sub-systems do not operate on 2D images. However, their output is mostly converted into a 2D image at some stage so that NUKE can produce a final 2D output image. For instance, the output of the 3D system is converted into a 2D image by the ScanlineRender node, at which point it becomes part of the scan-line based image system.

This section covers the fundamentals of the scan-line render system to give you an overview of how NUKE works. We will not cover the other sub-systems in detail here, although many of the concepts are shared with them.

A basic node graph

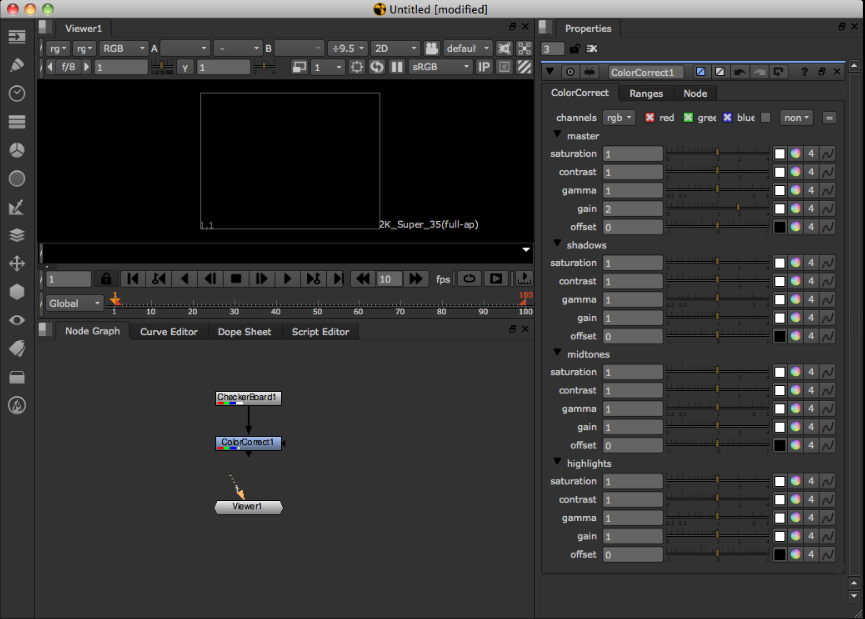

Imagine a simple image processing graph (DAG) in NUKE, consisting of three nodes. The first is a CheckerBoard, the second a ColorCorrect operation, the third a Viewer node.

You can think of these three nodes as representing the three fundamental types of node in NUKE: 1. a generator or input node (the CheckerBoard); 2. a filter or modifier node (the ColorCorrect); 3. an output node (the Viewer).

At this stage there is no image in the NUKE Viewer, because the Viewer node is not connected to the node graph.

Because there is no image present, no image processing or image loading has occurred.

The only time image processing occurs is when an output node asks its input nodes for an image. This is known as a ‘pull’ system.

An output node can be considered the bottom node in the graph: it produces output either on the screen (a Viewer) or on disk (a Write node).

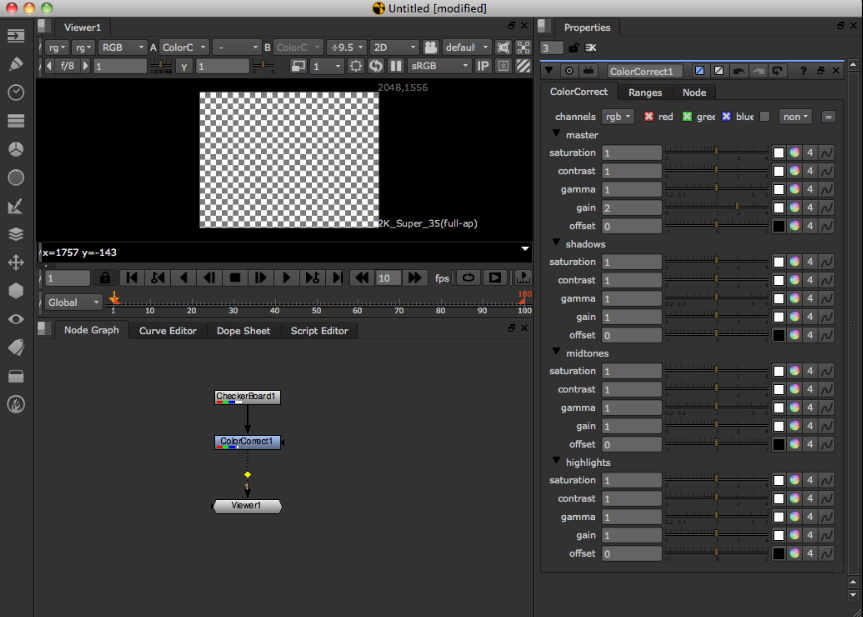

In the screen-shot above the user has connected the Viewer to the ColorCorrect operation.

At this stage the Viewer node needs to produce an output picture. To produce an image, it asks its input node (here, the ColorCorrect) for an image. The ColorCorrect now also needs to produce an image, so it in turn asks its input (the CheckerBoard) for an image. The CheckerBoard, being a generator with no inputs, doesn't need to ask anything upstream, so it simply returns an image to the ColorCorrect, which in turn modifies the image and returns it to the Viewer, and the processing tree is complete.

Fundamental image processing unit - the Row

As described earlier, NUKE is a scan-line based system. This means the fundamental unit of image processing in NUKE is the scan-line: one horizontal line of the image. In the NDK a scan-line is known as a Row.

NUKE always operates at the level of rows when processing images. Knowing this, the processing of an image in our simple three-node tree now looks something like this:

- The Viewer needs to display an image of 640x480 resolution.

- It splits the image up into 480 rows

- It asks its input, the ColorCorrect, for a Row at a time

- The ColorCorrect asks its input for a Row at a time

- The CheckerBoard generates a row at a time and returns it to the ColorCorrect

- The ColorCorrect modifies each Row and returns it to the Viewer

This architecture is the main reason NUKE can deal with almost unlimited image sizes. Because image processing works in Row-sized chunks, the whole image never needs to reside in memory at once.
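To make the pull model concrete, here is a minimal standalone C++ sketch of the three-node tree above. It does not use the NDK: Node, CheckerBoard, ColorCorrect and viewerPull are hypothetical names chosen for illustration. Each node fills one row on demand by pulling the same row from its input, and the output node only ever holds a single row buffer.

```cpp
#include <cassert>
#include <vector>

// Hypothetical standalone model of NUKE's pull-based, row-at-a-time
// evaluation. Node, CheckerBoard, ColorCorrect and viewerPull are
// illustrative names, not NDK API.
using RowData = std::vector<float>;

struct Node {
    virtual ~Node() = default;
    // Fill `out` with row y of this node's image.
    virtual void engine(int y, RowData& out) = 0;
};

// Generator: has no input, so it never pulls from anything upstream.
struct CheckerBoard : Node {
    int square;
    explicit CheckerBoard(int s) : square(s) {}
    void engine(int y, RowData& out) override {
        for (int x = 0; x < static_cast<int>(out.size()); ++x)
            out[x] = ((x / square + y / square) % 2) ? 1.0f : 0.0f;
    }
};

// Filter: pulls the same row from its input, then modifies it in place.
struct ColorCorrect : Node {
    Node* input;
    float gain;
    ColorCorrect(Node* in, float g) : input(in), gain(g) {}
    void engine(int y, RowData& out) override {
        input->engine(y, out);            // pull one row from upstream
        for (float& v : out) v *= gain;   // modify it
    }
};

// Output node: drives the graph one row at a time. Only one row-sized
// buffer exists at any moment; each row is reduced to a running sum
// where a real Viewer would draw it to the screen.
float viewerPull(Node& input, int width, int height) {
    assert(width > 0 && height >= 0);
    RowData row(width);
    float total = 0.0f;
    for (int y = 0; y < height; ++y) {
        input.engine(y, row);
        for (float v : row) total += v;
    }
    return total;
}
```

For example, with a 1-pixel checker, `viewerPull` on a ColorCorrect with gain 0.5 over a 4x2 image returns 2.0, having pulled only two rows through the tree.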

The Viewer and large image sizes

When a large image is displayed in the Viewer, there are typically fewer rows on the screen than there are in the full image. For instance, a 4K image that is 3112 rows high might be displayed in an on-screen box only 800 rows high. When this occurs, the Viewer does not ask its input for every Row of the image, as not all of them are required for display on the screen. In other words, it skips rows.

- The Viewer needs to display a 4K image in an 800-pixel-high box.

- It splits the image up into rows and asks its input only for the rows it needs, skipping the others, for example rows 0, 4, 8, 12, 16, etc.

- For each of these rows (0, 4, 8, 12, etc.) the ColorCorrect asks its input for the same row.

- The CheckerBoard generates rows 0, 4, 8, 12, etc.

This is one of the reasons NUKE is fast and interactive even when dealing with large image sizes.

This strategy for dealing with large images also means that rows are always computed at full horizontal resolution. Once a row has been computed it is correct no matter what the zoom level in the Viewer, so when the user zooms in, the Viewer only needs to ask its input for the rows it hasn’t asked for yet.
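The row-skipping and zoom behaviour can be sketched as below. This is a standalone C++ model under a stated assumption: that the Viewer picks rows with a fixed integer stride, which the example above (rows 0, 4, 8, 12) suggests but which is not necessarily NUKE's actual rule. rowsToFetch and newRowsAfterZoom are hypothetical helpers.

```cpp
#include <cassert>
#include <set>
#include <vector>

// Hypothetical sketch of Viewer row skipping. The real Viewer's choice of
// rows is internal to NUKE; here we assume a simple integer stride.
std::vector<int> rowsToFetch(int imageHeight, int displayHeight) {
    assert(displayHeight > 0);
    // Round the stride up so we never fetch more rows than can be shown.
    int stride = (imageHeight + displayHeight - 1) / displayHeight;
    if (stride < 1) stride = 1;
    std::vector<int> rows;
    for (int y = 0; y < imageHeight; y += stride) rows.push_back(y);
    return rows;
}

// Rows are computed at full horizontal resolution, so a fetched row stays
// valid at every zoom level. After a zoom, only rows never fetched before
// have to be requested from the input.
std::vector<int> newRowsAfterZoom(std::set<int>& alreadyFetched,
                                  const std::vector<int>& wanted) {
    std::vector<int> missing;
    for (int y : wanted)
        if (alreadyFetched.insert(y).second)  // true => not seen before
            missing.push_back(y);
    return missing;
}
```

With the numbers above, `rowsToFetch(3112, 800)` uses a stride of 4 and returns 778 rows; zooming to a 1600-pixel-high box then needs only the 778 rows 2, 6, 10, ... that were not fetched before.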

Multi-threading

When producing the output image the output node typically runs multiple threads to fetch multiple rows from its input at the same time.

For instance, on a 4-core system the Viewer will launch 4 threads, each independently processing one Row of input at a time. This is visible in the NUKE UI as two white lines: one marks the top-most thread processing a Row, the other the bottom-most thread. The threads are scheduled in a very simple first-come, first-served manner.

For instance, when processing a 480-row image with no row skipping, the Viewer will typically start its 4 threads on rows 0-3. As each thread finishes, it is given the next available Row to work on, until the whole image is complete.
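First-come, first-served scheduling can be modelled with a shared atomic row counter, as in this hypothetical standalone C++ sketch (renderRows is an illustrative name, not the NDK scheduler). Each worker claims the next unclaimed row until none remain, so every row is processed exactly once whatever the thread timing.

```cpp
#include <atomic>
#include <cassert>
#include <thread>
#include <vector>

// Hypothetical model of first-come, first-served row scheduling: each
// worker grabs the next unclaimed row from a shared counter until none
// remain. Illustrates the policy only; it is not NUKE's scheduler.
std::vector<int> renderRows(int height, int numThreads) {
    assert(numThreads > 0);
    std::vector<int> timesProcessed(height, 0);
    std::atomic<int> nextRow{0};

    auto worker = [&]() {
        for (;;) {
            int y = nextRow.fetch_add(1);  // claim the next available row
            if (y >= height) break;
            // A real worker would call engine() for row y here. Each row is
            // claimed by exactly one thread, so no two threads touch it.
            ++timesProcessed[y];
        }
    };

    std::vector<std::thread> pool;
    for (int i = 0; i < numThreads; ++i) pool.emplace_back(worker);
    for (auto& t : pool) t.join();
    return timesProcessed;
}
```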

In general, a processing operation will not create its own threads, but will run on one of the output node's processing threads as it is asked to generate a Row. NUKE has internal synchronisation that guarantees only one thread operates on a given Row of a given node at a time.

The Row Cache

When asked to produce a Row of output, some image processing operators actually need more than one Row of input.

For instance, a box blur may need many input rows for each output Row.

For example, imagine we added a Blur node after the ColorCorrect in our three-node tree. The rows the Blur needs from the ColorCorrect for one output Row overlap with those it needs for neighbouring output rows. In other words, the Blur asks the ColorCorrect for some rows multiple times.

To avoid computing the same rows multiple times, NUKE will generally create a cache for those rows. In this case, the first time a Row is requested from the ColorCorrect, it is copied into a cache. The next time the Blur asks for the same Row, it is returned from the cache instead of being computed again.
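The saving can be shown with a small standalone C++ model of a Row cache sitting between a ColorCorrect and a vertical box blur. CachedInput and boxBlurRow are hypothetical names, and the cached row contents are placeholder values; the point is computeCount, which records how many input rows were actually produced.

```cpp
#include <cassert>
#include <map>
#include <utility>
#include <vector>

// Hypothetical model of a Row cache in front of an input op.
struct CachedInput {
    int computeCount = 0;  // rows actually computed (cache misses)
    std::map<int, std::vector<float>> cache;

    const std::vector<float>& getRow(int y, int width) {
        auto it = cache.find(y);
        if (it != cache.end()) return it->second;  // cache hit
        ++computeCount;                            // miss: compute once
        std::vector<float> row(width, static_cast<float>(y));  // stand-in data
        return cache.emplace(y, std::move(row)).first->second;
    }
};

// Vertical box blur: output row y averages input rows y-radius..y+radius,
// clamped to the image. Neighbouring output rows share most input rows.
std::vector<float> boxBlurRow(CachedInput& in, int y, int width, int height,
                              int radius) {
    assert(radius >= 0 && height > 0);
    std::vector<float> out(width, 0.0f);
    for (int dy = -radius; dy <= radius; ++dy) {
        int sy = y + dy;
        if (sy < 0) sy = 0;
        if (sy >= height) sy = height - 1;
        const std::vector<float>& src = in.getRow(sy, width);
        for (int x = 0; x < width; ++x) out[x] += src[x];
    }
    for (float& v : out) v /= static_cast<float>(2 * radius + 1);
    return out;
}
```

Blurring all 10 rows of a 10-row image with radius 2 makes 50 row requests but, with the cache, computes each input row only once (computeCount is 10).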

The Row cache is what the NUKE UI describes as the ‘image buffers’. It can be cleared by pressing F12, and its maximum memory size is controlled in the preferences. The Row cache is an in-memory buffer; it is not generally written to disk, so it does not survive between runs of NUKE.

Note

There is one node that actually writes the Row cache to disk: the ‘disk cache node’. It writes to disk, or reads from disk, any rows it has been asked for.

Tiles

Often, when performing image calculations, your image processing engine will need to access more than one Row from its input to produce its output Row. To support this, NUKE has the concept of a Tile.

A Tile has accessor functions that allow you to access pixels as a two-dimensional array of the given tile size.

It is important to note that the fundamental unit of image processing in NUKE is still the Row. When a Tile is created for an input Op, a cache is created (if one didn’t already exist), and NUKE then fills the rows on that input Op that are required to fill the Tile. Those rows are locked in the NUKE cache for the lifetime of the Tile object. You can then use the Tile's accessor functions to read from those cached rows of the input.

This also means that when multiple threads create overlapping Tiles, many of the rows a new Tile needs are often already in the cache, so only minimal extra processing occurs.
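The relationship between Tiles and the row cache can be sketched as follows. This is a hypothetical standalone C++ model, not the NDK Tile class: constructing a Tile fetches (or reuses) the rows it covers and bumps a per-row lock count, destroying it releases the locks, and at(x, y) gives 2D access into the cached rows.

```cpp
#include <cassert>
#include <map>
#include <utility>
#include <vector>

// Hypothetical row cache with per-row lock counts (not NDK API).
struct RowCache {
    int computeCount = 0;
    std::map<int, std::vector<float>> rows;
    std::map<int, int> locks;  // lock count > 0 => row must not be freed

    const std::vector<float>& fetch(int y, int width) {
        auto it = rows.find(y);
        if (it == rows.end()) {
            ++computeCount;  // only rows not already cached are computed
            std::vector<float> r(width);
            for (int x = 0; x < width; ++x) r[x] = static_cast<float>(10 * y + x);
            it = rows.emplace(y, std::move(r)).first;
        }
        return it->second;
    }
};

// Hypothetical Tile: fills and locks rows [y, t) for its lifetime.
class Tile {
public:
    Tile(RowCache& cache, int y, int t, int width) : cache_(cache), y_(y), t_(t) {
        assert(y <= t);
        for (int row = y_; row < t_; ++row) {
            cache_.fetch(row, width);
            ++cache_.locks[row];
        }
    }
    ~Tile() {
        for (int row = y_; row < t_; ++row) --cache_.locks[row];
    }
    Tile(const Tile&) = delete;
    Tile& operator=(const Tile&) = delete;

    // 2D access into the locked, cached rows.
    float at(int x, int y) const { return cache_.rows.at(y)[x]; }

private:
    RowCache& cache_;
    int y_, t_;
};
```

Creating a Tile over rows 0-2 and then an overlapping one over rows 2-4 computes only five rows in total, and row 2 holds two locks until the second Tile is destroyed.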

Channels

NUKE handles image colors as color planes, which are known in NUKE as Channels.

A Channel describes one floating-point component of all the pixels in an image; a typical Channel would be red. Channel data is stored packed into arrays of 32-bit floats, holding the value of that channel for every pixel in a Row.

Multiple Channels make a Layer. A Layer assigns meaning to the channels in it: for example, the predefined rgb layer contains the channels r, g and b, so operators can know that b is the blue channel of the rgb layer.

Most often you will access channels in NUKE using the special ‘foreach’ macro, which loops over a set of Channels (a ChannelSet), giving you each Channel in turn:

```cpp
foreach(channel, channelSet) {
  float* outputPixel = row.writable(channel);
}
```

The above example loops through all the channels in a ChannelSet and gets the output pixel pointer for each channel.
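For readers unfamiliar with the macro, the following hypothetical standalone C++ sketch models what the loop does: a ChannelSet is treated as a bitmask, a Row stores one packed float array per channel, and forEachChannel visits each channel whose bit is set. The names mirror the NDK's concepts but are not its real types or its actual foreach implementation.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical standalone model (not the NDK types).
enum Channel { Red = 1, Green, Blue, Alpha, MaxChannels = 32 };

using ChannelSet = std::uint32_t;  // bit c set => channel c is in the set

constexpr ChannelSet bit(Channel c) { return ChannelSet(1) << c; }

struct Row {
    // data[c][x] is channel c at pixel x: one packed float array per channel.
    std::vector<std::vector<float>> data;
    explicit Row(int width)
        : data(MaxChannels, std::vector<float>(width, 0.0f)) {}
    float* writable(Channel c) { return data[c].data(); }
};

// Plays the role of foreach(channel, channelSet): calls fn once for each
// channel whose bit is set, in increasing channel order.
template <typename F>
void forEachChannel(ChannelSet set, F fn) {
    for (int c = 0; c < MaxChannels; ++c)
        if (set & (ChannelSet(1) << c)) fn(static_cast<Channel>(c));
}
```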

The Viewer Cache

It is worth mentioning here that NUKE uses another cache besides the Row cache: the Viewer cache, which is described in the preferences and UI as the disk cache. The Viewer cache is a file written to disk for each frame as it is displayed in the Viewer. It is not used for image processing and is separate from the Row cache. Its primary purpose is quick display of images in the Viewer for playback and viewing. Because it is written to disk, it does survive between runs of NUKE.

When the Viewer, as an output node, needs to produce an output image for display, it first checks whether any of the rows it needs to display are in the Viewer cache on disk. If so, it displays them directly rather than asking its input for those rows. Any missing rows are requested from the Viewer's input as usual.

Memory

When the Row cache's memory usage exceeds the limit set in the preferences, NUKE searches the Row cache and frees as many rows as required to bring memory usage under the limit, with around 10% extra headroom. The algorithm that decides which rows are freed first typically combines request count, row access count and op ‘slowness’. Any rows that are ‘locked’ by an Interest or Tile cannot be freed by NUKE.
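The eviction step can be sketched as below. This hypothetical standalone C++ model assumes that the 10% headroom means freeing until usage is at or below 90% of the limit, and it uses an invented score combining the three factors named above; NUKE's real weighting is not described here. Locked rows are never considered.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical cached-row record (not NDK API).
struct CachedRow {
    std::size_t bytes;
    int requestCount;
    int accessCount;
    double opSlowness;  // e.g. how long the producing op took for this row
    bool locked;        // held by an Interest or Tile: never freed
    bool freed;
};

// Invented heuristic: lower score = cheaper to lose, so freed first.
double keepScore(const CachedRow& r) {
    return (r.requestCount + r.accessCount) * r.opSlowness;
}

// Frees unlocked rows, cheapest first, until usage <= 90% of the limit.
// Returns the new usage.
std::size_t freeRows(std::vector<CachedRow>& rows, std::size_t usage,
                     std::size_t limit) {
    assert(limit > 0);
    if (usage <= limit) return usage;
    std::size_t target = limit - limit / 10;  // ~10% headroom (assumed)
    std::vector<CachedRow*> candidates;
    for (auto& r : rows)
        if (!r.locked && !r.freed) candidates.push_back(&r);
    std::sort(candidates.begin(), candidates.end(),
              [](const CachedRow* a, const CachedRow* b) {
                  return keepScore(*a) < keepScore(*b);
              });
    for (CachedRow* r : candidates) {
        if (usage <= target) break;
        r->freed = true;
        usage -= r->bytes;
    }
    return usage;
}
```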

Any user plugin that needs to allocate its own temporary buffers must make sure it allocates them, and registers for out-of-memory conditions, using the DDImage::Memory functions.

Note

Items other than rows may also contribute to memory usage and trigger a memory free event: for instance, 3D geometry and any user-allocated memory registered with DDImage.

Iop Call Order

Construction, knob handling and destruction of Iops is inherited directly from the Op class, as introduced in the Fundamental Concepts chapter, and discussed in detail in the Architecture section.

_validate

- void Iop::_validate(bool for_real)

As described in the Architecture chapter, validation consists of both common Op-level functionality and subclass-dependent functionality. In the case of Iop and its children, _validate is responsible for setting up the “IopInfo”.

The IopInfo contains various fields:

- IopInfo subclasses from the Box class. This is a simple bounding box and represents the area in which the Op has pixels, including any overscan which is available. This is displayed in the Viewer as the bounding box (or bbox).

- Two formats. One is the “fullSizeFormat”, and one is the regular “format”. In non-proxy mode these are the same, but in proxy mode the original, unscaled format is stored in “fullSizeFormat”, and the effective format as used by proxying is stored in “format.” The format is the region to which the bounding box is clipped when displayed in the Viewer.

- channels. These are the channels for which pixel data is defined. Attempts to fetch Channels from Iops which do not have these channels will result in zeroes.

- first_frame/last_frame. These give the frame range over which the Iop is defined. For example, a Read node will fill these in.

- black_outside. By default NUKE edge-extends Iops when you attempt to access them outside their bounding box. This is not always desirable, so Ops can set ‘black_outside’. This is slightly confusing: rather than changing the default extension behaviour, it means that the pixel data has been padded with an extra layer of black pixels (and the bbox padded similarly), so when the bbox is used as plain numbers, that extra padding is stripped back out.

- ydirection indicates the preferred y order. A negative value indicates that the preferred access order is from the top (high y) to the bottom (low y), a positive value indicates that the preferred access order is the other way round.

_validate is called on the main thread. It should avoid doing excessive calculations, as it will block the UI during its call. In particular, it should avoid calculating the image on the input, as this can take arbitrarily long.

The Iop has another field that can be set in _validate(), namely “out_channels_”. This defaults to Mask_All, in which case it has no effect. If it is set to something smaller, NUKE can, as an optimisation, skip calling _request() and engine() on this Iop entirely and go straight to its input Iop (by default input(0), but this can be changed by setting raw_channels_from). This is not guaranteed, so implementations of engine() should still be able to deal with being called for channels not in out_channels_.

During _validate you can assume that the Op has been built for the right frame and view. outputContext() is valid and the knobs have been stored via knobs().

_request

- void Iop::_request(int x, int y, int r, int t, ChannelMask channels, int count)

_request() is called by NUKE on your Iop to indicate the region and channels of interest. In turn your Iop should indicate the region and channels of interest by calling request() on its inputs, as appropriate. It is important where possible to keep the region of interest down to the minimum necessary, as NUKE will potentially calculate and cache the full region of interest.

In a complex tree, NUKE might call _request on your Iop multiple times. Each time it passes the cumulative region and channels of interest.

_request() is usually handled in the main (UI) thread, and, like _validate() should avoid taking too long.

The count parameter is a hint to the caching, and indicates how many overlapping requests have been made. When calling request() on your inputs, you can pass a value greater than one to force the input to cache its data.
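The cumulative behaviour of _request() can be modelled like this. In this hypothetical standalone C++ sketch, RequestState unions each requested box and channel mask into the region of interest and sums the counts, treating a total above one as a signal to cache, as described above. The type and field names are illustrative, not the NDK's.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Hypothetical model of cumulative requests (not NDK API).
struct Box { int x, y, r, t; };  // x,y inclusive; r,t exclusive

struct RequestState {
    bool any = false;
    Box roi{0, 0, 0, 0};
    std::uint32_t channels = 0;
    int count = 0;

    void request(Box b, std::uint32_t ch, int n) {
        assert(n > 0);
        if (!any) {
            roi = b;
            any = true;
        } else {  // grow the region of interest to cover both boxes
            roi.x = std::min(roi.x, b.x);
            roi.y = std::min(roi.y, b.y);
            roi.r = std::max(roi.r, b.r);
            roi.t = std::max(roi.t, b.t);
        }
        channels |= ch;  // union of requested channels
        count += n;      // overlapping requests accumulate
    }

    // count > 1 is taken as "worth caching", per the text above.
    bool shouldCache() const { return count > 1; }
};
```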

open

- void Iop::open()

_open is called immediately before the first engine() call on an Iop, after it has been _request()ed. Like engine(), it is called on a worker thread, so it can take any amount of time without causing the UI to block excessively. Calls to open() are locked so that only one will happen, and it will be complete by the time other calls to engine() begin.

Unlike validate/request/engine, _open does not need to, and should not, recursively call its inputs.
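The guarantee that open() runs once, and finishes before other engine() calls proceed, matches the semantics of std::call_once. The hypothetical standalone C++ sketch below uses it to build a lookup table exactly once; LazyOp is an illustrative name, and in the NDK it is NUKE, not your engine(), that arranges for open() to be called.

```cpp
#include <atomic>
#include <cassert>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical model of the open()-once guarantee (not NDK code).
class LazyOp {
public:
    void engine(int /*y*/) {
        // Exactly one caller runs _open(); all others wait until it is done.
        std::call_once(opened_, [this] { _open(); });
        // From here on, table_ is fully built and only read.
        assert(!table_.empty());
        ++engineCalls;
    }

    std::atomic<int> openCalls{0};
    std::atomic<int> engineCalls{0};

private:
    void _open() {
        ++openCalls;
        table_.assign(256, 0.0f);  // e.g. a lookup table built once
        for (int i = 0; i < 256; ++i) table_[i] = i / 255.0f;
    }

    std::once_flag opened_;
    std::vector<float> table_;
};
```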

engine (/pixel_engine/draw_engine)

- void Iop::engine(int y, int l, int r, ChannelMask channels, Row& row)

The engine function is responsible for actually operating on the image data. The engine type called depends on the Iop type, with engine() relevant to Iops, pixel_engine() relevant to PixelIops and draw_engine() to DrawIops. In this section we’ll explicitly discuss engine(), however the topics covered are relevant to all Iop derived classes and their engine methods.

engine() is called by NUKE on your Iop as the function that actually processes image data. It is called on the worker threads, and therefore should be thread-safe against itself and should not make a large number of calls. In general it should not call functions on knobs, particularly those that set or get values, as these calls are not thread-safe and may result in crashes. Any calculations based on knob values that it requires should already have taken place in _validate, and should result in fields within the Iop being set that engine() can later refer to.
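The recommended split between _validate and engine() is sketched below as a hypothetical standalone C++ class (ExposureOp and knobStops are illustrative names, not NDK API). The knob-derived multiplier is computed once in _validate(), and engine() only reads that field, so concurrent engine() calls share no mutable state.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical standalone model (not NDK code) of the recommended split.
class ExposureOp {
public:
    float knobStops = 0.0f;  // stands in for a value stored by knobs()

    // Main thread: turn knob values into plain fields once.
    void _validate() { multiplier_ = std::pow(2.0f, knobStops); }

    // Worker threads: reads only multiplier_, writes only `row`.
    void engine(std::vector<float>& row) const {
        for (float& v : row) v *= multiplier_;
    }

private:
    float multiplier_ = 1.0f;
};
```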

It is passed coordinates representing a segment of a scanline, a set of channels, and a Row by reference. It should set up the Row so that all of the requested channels are defined over the range l ... r. The Row's extents will previously have been set to cover this range.

A trivial implementation of engine is as follows:

```cpp
void engine(int, int, int, ChannelMask channels, Row& row) {
  row.erase(channels);
}
```

This blanks all the channels. Blanking must be done explicitly, as the state of Row is not defined to be empty initially. Another example, that fills in all the pixels with the value 1, is as follows:

```cpp
void engine(int y, int l, int r, ChannelMask channels, Row& row) {
  foreach(z, channels) {
    float* out = row.writable(z);
    for (int x = l; x < r; x++) {
      out[x] = 1.0f;
    }
  }
}
```

Here we see the use of writable(Channel), which obtains a pointer to the Row’s internal buffer for that channel, which the calling code can write into. The pointer returned by writable(Channel) is offset so that it is valid to index between the left and right extents of the Row (specified in its constructor, which has been run by the code that calls engine). It is not always valid to simply dereference writable(Channel), as the Row's left extent may be greater than 0.
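The indexing convention can be modelled as follows. This hypothetical standalone C++ sketch (ChannelBuffer is an illustrative name) stores only the pixels from left to right and translates x into the buffer, so that out[x] is valid exactly for x in [left, right). It uses an index-translating view rather than a pre-offset raw pointer to keep the C++ well-defined.

```cpp
#include <cassert>
#include <vector>

// Hypothetical model of a channel buffer covering only [left, right).
class ChannelBuffer {
public:
    ChannelBuffer(int left, int right)
        : left_(left), right_(right), data_(right - left, 0.0f) {}

    // Valid only within the Row's extents, like writable(Channel)[x].
    float& operator[](int x) {
        assert(x >= left_ && x < right_);
        return data_[x - left_];
    }
    int left() const { return left_; }
    int right() const { return right_; }

private:
    int left_, right_;
    std::vector<float> data_;  // pixels left_ .. right_-1
};
```

Note that with a left extent of 100, index 0 (the equivalent of dereferencing the pointer directly) is outside the buffer and trips the assertion.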

engine() can also fetch data from the input. For example, a simple passthrough operator would have this engine:

```cpp
void engine(int y, int l, int r, ChannelMask channels, Row& row) {
  row.get(input0(), y, l, r, channels);
}
```

If it is possible to write your operator as a simple in-place modification of its input, it is recommended to do so, as this leads to more efficient memory use. For example, a simple gain would end up being:

```cpp
void engine(int y, int l, int r, ChannelMask channels, Row& row) {
  // Fetch the input row into this row, then modify it in place.
  row.get(input0(), y, l, r, channels);
  foreach(z, channels) {
    if (z == Chan_Red || z == Chan_Green || z == Chan_Blue) {
      const float* in = row[z];
      float* out = row.writable(z);
      for (int x = l; x < r; x++) {
        out[x] = in[x] * _gain;  // _gain: assumed member set up in _validate()
      }
    }
  }
}
```

For more details about how to fetch image data in engine, please see [section]. Also, various subclasses of Iop and helper classes exist, primarily to remove boilerplate from engine and to add extra functionality.

close

- void Iop::_close()

_close is called some time after rendering has finished (for the moment). This cannot be relied upon to always happen when rendering finishes, but if _close is called it should be safe to .

If NUKE decides that it needs to do more work, it will call open() again. Unlike validate/request/engine, _close does not need to, and should not, recursively call its inputs.

Call Safety

All the functions above are called on the main (UI) thread, except for engine() and open(), which are called from worker threads.

You must ensure that any call on the main thread executes as quickly as possible to prevent any unwanted delays in the user interface.

Calls such as validate, request, invalidate, and UI or knob functions must not be made from the engine call or any other worker thread. Doing so is not thread-safe and will result in instability.

In general, the following can be used from within an engine call:

- The Row class

- The Interest class

- The Tile class

- The Pixel class

- ChannelSet and other channel functions

- Calling the Op progress functions

- Calling error() or warning() on an Op

Formats & Bounding Boxes: RODs & ROIs

_validate() produces as a side-effect the region of definition (in pixel coordinates) for an Iop, and places it into the IopInfo.

It should also produce two formats: the “full size format” (ignoring any downrez/proxy) and the “format” (which takes any downrez/proxy into account).

A simple implementation that just copies the (sole) input’s IopInfo is as follows:

```cpp
void _validate(bool forReal)
{
  copy_info(0);
}
```

The default implementation also calls merge_info() to merge in the bboxes and channels of the other inputs.
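What copying input 0's info and merging in the other inputs amounts to for the bbox and channels can be sketched as below. This hypothetical standalone C++ model assumes that merging means taking the union of the bboxes and channel sets; the real IopInfo also carries formats, frame range and other fields that are not modelled here.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical, heavily simplified stand-in for IopInfo.
struct Info {
    int x, y, r, t;          // bbox: x,y inclusive; r,t exclusive
    std::uint32_t channels;  // bitmask of defined channels
};

Info copyAndMerge(const std::vector<Info>& inputs) {
    assert(!inputs.empty());
    Info out = inputs[0];  // like copy_info(0)
    for (std::size_t i = 1; i < inputs.size(); ++i) {  // merge the rest
        const Info& in = inputs[i];
        out.x = std::min(out.x, in.x);
        out.y = std::min(out.y, in.y);
        out.r = std::max(out.r, in.r);
        out.t = std::max(out.t, in.t);
        out.channels |= in.channels;
    }
    return out;
}
```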

NUKE then will call _request to indicate the region of interest. This should call request() on all its inputs as necessary, with that Op’s region of interest on those inputs. For example, a blur might wish to implement this like so:

```cpp
void _request(int x, int y, int r, int t, ChannelMask channels, int count)
{
  input(0)->request(x - _size[0], y - _size[1], r + _size[0], t + _size[1], channels, count);
}
```

NUKE’s implementation of request(), which forwards to the plugin’s _request() function, clips the requested region. As a result you will never see a request larger than the bbox you specified in _validate(), and you can safely request boxes larger than the info bboxes.
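The blur request and NUKE's clipping can be modelled together in a small hypothetical standalone C++ sketch (Box, blurRequest and clipTo are illustrative names). Unlike the one-line _request above, which converts _size to int implicitly, this version rounds fractional sizes up so that every needed pixel is covered; that rounding is a design choice of the sketch.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hypothetical model (not NDK API).
struct Box { int x, y, r, t; };  // x,y inclusive; r,t exclusive

// The blur's region of interest: the output box grown by the blur size.
Box blurRequest(Box out, const float size[2]) {
    int dx = static_cast<int>(std::ceil(size[0]));
    int dy = static_cast<int>(std::ceil(size[1]));
    return {out.x - dx, out.y - dy, out.r + dx, out.t + dy};
}

// The clipping step: what the input actually sees is the request
// intersected with its bbox.
Box clipTo(Box b, Box bbox) {
    return {std::max(b.x, bbox.x), std::max(b.y, bbox.y),
            std::min(b.r, bbox.r), std::min(b.t, bbox.t)};
}
```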

Coordinate System

NUKE’s coordinate system is internally pixel-based, with (0, 0) at the lower left. Support for non-square pixel aspect ratios and for proxy scaling/downrezzing exists and is generally transparent: the user sees proper canonical coordinates, while the code generally sees pixel coordinates.

The pixel aspect ratio is a property of the image format, rather than the data itself. When the knobs are being stored, NUKE will take into account the pixel aspect ratio for certain types of knobs. For example, suppose there is a Blur node, which has a “size” parameter, as follows:

```cpp
float _size[2];

Blur() {
  _size[0] = _size[1] = 10;
}

void knobs(Knob_Callback f) {
  WH_knob(f, _size, "size");
}
```

With square pixels and no proxy mode, the size will end up being set to (10, 10). If the pixels are twice as wide as they are high, then to achieve a blur that looks the same vertically as horizontally, we should blur by only half as many pixels horizontally as vertically. The call to WH_knob() therefore takes input_format().pixel_aspect() into account, and results in _size being set to (5, 10) rather than (10, 10).

Proxy scaling is handled in a similar manner, except that proxy scales are passed in through the OutputContext (i.e. they go from the bottom upwards, rather than from the top downwards like pixel aspect ratios). In this example, if the OutputContext was set to downrez by 2, then _size would end up being (5, 5). The effect is multiplicative, so if the pixel aspect ratio is 2:1 in addition to a downrez of 2, _size would be (2.5, 5).
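The scaling arithmetic in the two paragraphs above can be checked with a hypothetical helper (scaledKnobSize is not NDK API; NUKE applies this scaling itself when storing WH_knob-style knobs). The horizontal size is divided by both the pixel aspect ratio and the downrez factor, and the vertical size only by the downrez factor.

```cpp
#include <cassert>

struct Size2 { float w, h; };

// pixelAspect: pixel width / pixel height of the input format.
// downrez: proxy/downrez factor from the OutputContext (2 = half size).
Size2 scaledKnobSize(float w, float h, float pixelAspect, float downrez) {
    assert(pixelAspect > 0.0f && downrez > 0.0f);
    // Wider pixels mean fewer pixels span the same horizontal distance;
    // the two effects multiply.
    return {w / (pixelAspect * downrez), h / downrez};
}
```

`scaledKnobSize(10, 10, 2, 1)` gives (5, 10), `(10, 10, 1, 2)` gives (5, 5), and `(10, 10, 2, 2)` gives (2.5, 5), matching the examples in the text.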

This sort of automatic downrezzing happens during the knobs() call, for certain knobs: those dealing with 2D space. These are:

- BBox_knob

- Transform2d_knob

- WH_knob

- XY_knob

It is possible to turn this off on a per-knob basis by setting the NO_PROXYSCALE flag on a knob. This applies only to the scaling from the OutputContext: that from the pixel aspect ratio always takes place. If you wish to do the equivalent on data not in those types of knobs, you can use the OutputContext::to_proxy and OutputContext::from_proxy functions to do this manually.